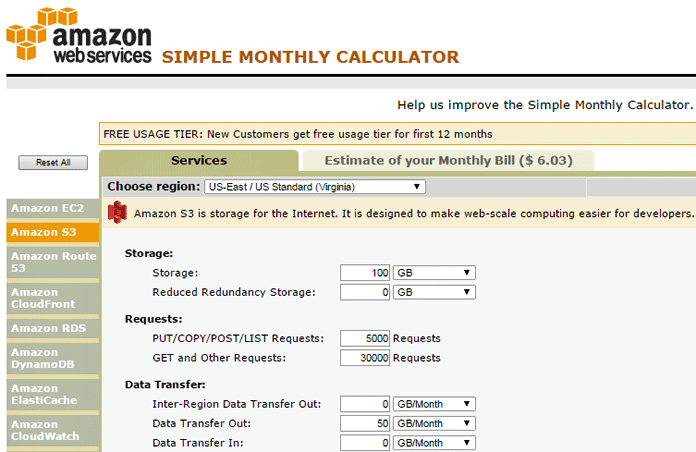

(Long time readers of this blog may be surprised I didn't list the command lines to accomplish this task, but Amazon has not yet released useful S3 tools that include the required functionality.)

Since all of the objects in the buckets were more than 60 days old, I expected them to be transitioned to Glacier within a day, and true to Amazon's documentation, this occurred on schedule.

What I did not expect was an email alert from my AWS billing alarm monitor on this account letting me know that I had just passed $200 for the month, followed a few hours later by an alert for $300, [...]

This is one of my personal accounts, so a rate of several hundred dollars a day is not sustainable. [...] showed that this increase was due to one time charges, so I wasn't [...]

The line item on the AWS Activity report showed the source [...]

It had not occurred to me that there would be much of a charge for transitioning the objects from S3 to Glacier.

Glacier Archive and Restore Requests: $0.05 per 1,000 requests

This is five times as expensive as the initial process of putting objects into S3, which is $0.01 per 1,000 PUT requests.

There is one "archive request" for each S3 object that is transitioned from S3 to Glacier, and I had over five million objects in these buckets, something I didn't worry about previously because my monthly S3 charges were based on the total GB, not the number of objects. At $0.05 per 1,000 requests, five million archive requests alone come to $250.

Overhead per Glacier Object

Each AWS cloud service provides a REST API, and this is great. In addition, I can use these APIs from my services. For example, I can store a new object in my AWS S3 bucket from C#.

However, to invoke these APIs, I must set up the authentication for my request in my custom connector. In other words, I must authenticate all my requests with the AWS Signature Version 4. In detail, Amazon uses a lot of generated checksums, specifically Hash-based Message Authentication Codes (HMAC). This signing process involves creating a canonicalized version of my request, which includes the request's path, headers, and body. Hmm, there is no out-of-the-box solution to do this with a custom connector in Power Platform.

AWS API Gateway

For that reason, I must simplify the accessibility of my AWS S3 Bucket API. In Microsoft Azure I would choose an API Management Gateway for this task. But here I work with components in the AWS cloud. For that reason, I use an AWS API Gateway.

An API Gateway is a fully managed service in AWS cloud that enables developers to create, publish, maintain, monitor, and secure APIs. Yes, this is exactly the component I need for this task.

I start my example from my AWS Management Console where my AWS S3 Bucket mme2k-s3-upload already exists.

The setup of a custom connector is straightforward when you can use a service definition. For that reason, here is my definition:

swagger: '2.0'
description: 'API to upload files to an AWS S3 Bucket'

Note: You must change the values of host and basePath to the values from your deployed API Gateway.

My method Upload consumes two parameters. The first parameter is filename, part of the service route and used for the S3 object name. My second parameter is file and stores the content of my file into the request body. In other words, my content is a text file. For that reason, I use that definition:

parameters:

You see the result when I switch to the Swagger editor of my custom connector and navigate to the method preview:

To summarize, I first encountered a problem with Power Automate Flow while trying to upload CSV files to an AWS S3 Bucket. The standard Amazon S3 connector lacked the capability to upload files, so I had to find an alternative solution.

My solution was to set up a custom connector for the S3 Bucket in the Power Platform. Moreover, I discovered that authentication with AWS Signature Version 4 would be challenging in a custom connector. To simplify my API accessibility, I used an AWS API Gateway. Furthermore, I created a REST API and configured a PUT method for file uploads to the S3 Bucket. I also described the setup of the necessary IAM execution role and policy. In addition, I secured my API with an API key.

Finally, I have set up a custom connector for my API. In addition, I created a Power Automate Flow using my custom connector to upload CSV files into my AWS S3 Bucket. Overall, this approach bypassed the limitations of the standard connector and achieved the desired file upload functionality.

Note: Limitations of AWS API Gateway in terms of request size are documented here.
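To make the upload call concrete, here is a minimal Python sketch of the kind of request a client would send to the API Gateway: a PUT with the file name in the route, the CSV text in the body, and the API key in the `x-api-key` header. The invoke URL, stage name, key value, the `/{filename}` route shape, and the `text/plain` content type are all assumptions for illustration; they are not taken from my deployed API, so replace them with your own values.

```python
import urllib.request
from urllib.parse import quote

# Hypothetical placeholders: use the invoke URL and API key of your own deployment.
API_BASE_URL = "https://example.execute-api.eu-central-1.amazonaws.com/v1"
API_KEY = "replace-with-your-api-key"


def build_upload_request(filename: str, content: str) -> urllib.request.Request:
    """Build a PUT request that sends text content to {API_BASE_URL}/{filename}."""
    # The file name becomes part of the route (assumed shape: /{filename}).
    url = f"{API_BASE_URL}/{quote(filename, safe='')}"
    return urllib.request.Request(
        url,
        data=content.encode("utf-8"),  # file content travels in the request body
        method="PUT",
        headers={
            "Content-Type": "text/plain",  # assumed: the content is a text file
            "x-api-key": API_KEY,          # header API Gateway checks for API keys
        },
    )


request = build_upload_request("report.csv", "id,name\n1,Alice\n")

# Sending is left commented out because the URL above is only a placeholder:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

Because the request carries only an API key, the client does not need to compute an AWS signature, which is the simplification the gateway is meant to provide.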

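For context on why AWS Signature Version 4 is hard to reproduce in a custom connector, the following Python sketch walks through the chain of HMAC-SHA256 steps for a single PUT request. It is a simplified illustration, not a complete signer: it skips query-string handling and URI encoding, and the region, timestamp, object name, and credentials are placeholder assumptions (the keys are the well-known example values from the AWS documentation, not real credentials).

```python
import hashlib
import hmac


def hmac_sha256(key: bytes, message: str) -> bytes:
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date_stamp: str, region: str, service: str) -> bytes:
    """Derive the signing key by chaining HMACs over date, region and service."""
    k_date = hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = hmac_sha256(k_date, region)
    k_service = hmac_sha256(k_region, service)
    return hmac_sha256(k_service, "aws4_request")


def build_canonical_request(method: str, path: str, headers: dict, payload: bytes):
    """Return the canonical request and the signed-headers list (no query string)."""
    items = sorted((name.lower(), value.strip()) for name, value in headers.items())
    canonical_headers = "".join(f"{name}:{value}\n" for name, value in items)
    signed_headers = ";".join(name for name, _ in items)
    payload_hash = hashlib.sha256(payload).hexdigest()
    canonical = "\n".join([method, path, "", canonical_headers, signed_headers, payload_hash])
    return canonical, signed_headers


def build_string_to_sign(amz_date: str, scope: str, canonical_request: str) -> str:
    hashed = hashlib.sha256(canonical_request.encode("utf-8")).hexdigest()
    return "\n".join(["AWS4-HMAC-SHA256", amz_date, scope, hashed])


# Placeholder inputs (assumptions for illustration only).
ACCESS_KEY = "AKIAIOSFODNN7EXAMPLE"
SECRET_KEY = "wJalrXUtnFEMI/K7MDENG+bPxRfiCYEXAMPLEKEY"
AMZ_DATE = "20240101T000000Z"
DATE_STAMP = AMZ_DATE[:8]
REGION = "eu-central-1"  # assumed region
SERVICE = "s3"

payload = b"id,name\n1,Alice\n"
headers = {
    "Host": f"mme2k-s3-upload.s3.{REGION}.amazonaws.com",
    "x-amz-content-sha256": hashlib.sha256(payload).hexdigest(),
    "x-amz-date": AMZ_DATE,
}

canonical, signed_headers = build_canonical_request("PUT", "/report.csv", headers, payload)
scope = f"{DATE_STAMP}/{REGION}/{SERVICE}/aws4_request"
string_to_sign = build_string_to_sign(AMZ_DATE, scope, canonical)
signing_key = derive_signing_key(SECRET_KEY, DATE_STAMP, REGION, SERVICE)
signature = hmac.new(signing_key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()

authorization = (
    f"AWS4-HMAC-SHA256 Credential={ACCESS_KEY}/{scope}, "
    f"SignedHeaders={signed_headers}, Signature={signature}"
)
```

Every request needs a fresh timestamp, payload hash, and signature, which is the part a declarative custom connector cannot compute on its own.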